Introduction

Kubernetes objects are the fundamental persistent entities that describe the state of the Kubernetes cluster. Pods are the elementary objects and the building blocks of Kubernetes architecture.

This article will provide a comprehensive beginner's overview of Kubernetes pods. Understanding how pods work will help you get a grasp on the mechanism behind this container orchestration platform.

What Is a Kubernetes Pod?

The pod is the smallest deployment unit in Kubernetes, an abstraction layer that hosts one or more OCI-compatible containers. Pods provide containers with the environment to run in and ensure the containerized apps can access storage volumes, network, and configuration information.

Kubernetes Pods vs. Containers vs. Nodes vs. Clusters

Pods serve as a bridge that connects application containers with other higher concepts in the Kubernetes hierarchy. Here is how pods compare to other essential elements of the Kubernetes orchestration platform.

- Pods vs. Containers. A container packs all the necessary libraries, dependencies, and other resources necessary for an application to function independently. On the other hand, a pod creates a wrapper with dependencies that allow Kubernetes to manage application containers.

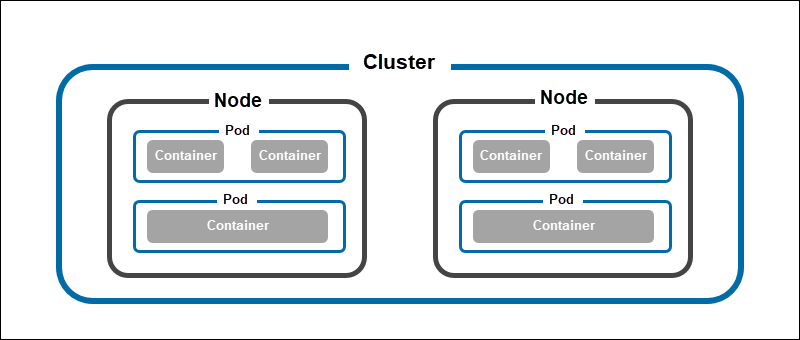

- Pods vs. Nodes. A node in Kubernetes is a bare metal or virtual machine that is responsible for hosting pods. A single node can run multiple pods. While each pod must have a node to run on, not all nodes host application pods. The master node features a control plane that controls pod scheduling, while application pods reside on worker nodes.

- Pods vs. Cluster. A Kubernetes cluster is a group of nodes with at least one master node (high-availability clusters require more than one master) and up to 5000 worker nodes. Clusters enable pod scheduling on various nodes with different configurations and operating systems.

Note: While Kubernetes officially supports up to 5000 nodes in a cluster, resource constraints usually keep practical cluster sizes well below that limit, often at around 500 nodes.

Types of Pods

Based on the number of containers they hold, pods can be single-container and multi-container pods. Below is a brief overview of both pod types.

Single-Container Pods

Pods in Kubernetes most often host a single container that provides all the necessary dependencies for an application to run. Single container pods are simple to create and offer a way for Kubernetes to control individual containers indirectly.

Multi-Container Pods

Multi-container pods host containers that depend on each other and share the same resources. Inside such pods, containers can establish simple network connections and access the same storage volumes. Since they are all in the same pod, Kubernetes treats them as a single unit and simplifies their management.
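As a minimal sketch of the idea above, the following manifest describes a pod with two containers that mount the same volume. The pod name, container names, image tags, and log path are assumptions chosen for illustration and are not taken from a real deployment.

```yaml
# Hypothetical multi-container pod: a web server plus a helper container
# that reads the log file the web server writes to a shared volume.
apiVersion: v1
kind: Pod
metadata:
  name: web-with-sidecar        # illustrative name
spec:
  volumes:
    - name: shared-logs
      emptyDir: {}              # ephemeral volume shared by both containers
  containers:
    - name: web
      image: nginx:1.25
      ports:
        - containerPort: 80
      volumeMounts:
        - name: shared-logs
          mountPath: /var/log/nginx
    - name: log-reader
      image: busybox:1.36
      # Follows the access log written by the web container.
      command: ["sh", "-c", "tail -F /var/log/nginx/access.log"]
      volumeMounts:
        - name: shared-logs
          mountPath: /var/log/nginx
          readOnly: true
```

Because both containers also share the pod's network namespace, the log-reader container could reach the web server at localhost:80 without any extra configuration.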

Benefits of Using Kubernetes Pods

The pod design is one of the main reasons Kubernetes gained popularity as a container orchestrator. By employing pods, Kubernetes can improve container performance, limit resource consumption, and ensure deployment continuity.

Here are some of the crucial benefits of Kubernetes pods:

- Container abstraction. Since a pod is an abstraction layer for the containers it hosts, Kubernetes can treat those containers as a single unit within the cluster, simplifying container management.

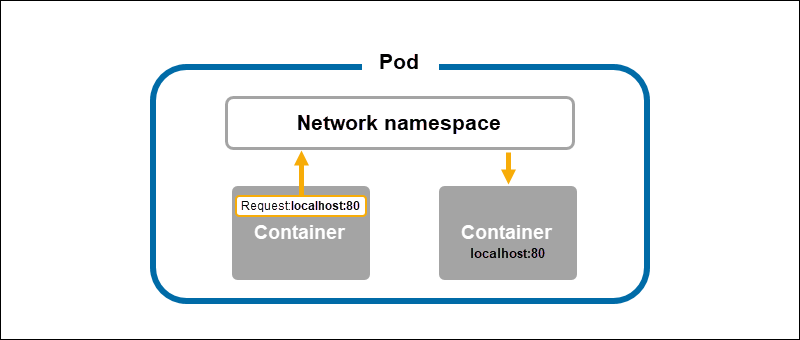

- Resource sharing. All the containers in a single pod share the same network namespace. This property ensures they can communicate via localhost, which simplifies networking significantly. Aside from sharing a network, pod containers can also share storage volumes, a feature particularly useful for managing stateful applications.

- Load balancing. Pods can replicate across the cluster, and a load-balancing service can balance traffic between the replicas. Kubernetes load balancing is an easy way to expose an app to outside network traffic.

- Scalability. Kubernetes can automatically increase or decrease the number of pod replicas depending on the predetermined factors. This feature allows you to fine-tune the system to scale up or down depending on the workload.

- Health monitoring. The system conducts regular health checks on the containers in a pod and restarts the containers that fail them. Additionally, Kubernetes replaces the pods that crash or become unhealthy when a controller manages them. Automatic health monitoring is an important factor in maintaining the application's uptime.
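To illustrate the health monitoring benefit, here is a minimal sketch of a pod that declares a readiness probe and a liveness probe. The pod name, the probed path, and the timing values are assumptions picked for the example, not recommended settings.

```yaml
# Hypothetical pod with health checks on its single container.
apiVersion: v1
kind: Pod
metadata:
  name: nginx-probed            # illustrative name
spec:
  containers:
    - name: nginx
      image: nginx:1.25
      ports:
        - containerPort: 80
      readinessProbe:           # controls whether the pod receives traffic
        httpGet:
          path: /
          port: 80
        periodSeconds: 5
      livenessProbe:            # a failing check makes Kubernetes restart the container
        httpGet:
          path: /
          port: 80
        initialDelaySeconds: 10
        periodSeconds: 10
        failureThreshold: 3
```

With these assumed values, the container is restarted after three consecutive failed liveness checks, while a failing readiness check only removes the pod from the list of service endpoints.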

How Do Pods Work?

Pods run according to a set of rules defined within their Kubernetes cluster and the configuration provided upon the creation of the object that generated them. The following sections provide an overview of the most important concepts related to the life of a pod.

Lifecycle

The lifecycle of a pod depends on its purpose in the cluster and the Kubernetes object that created it.

Kubernetes objects such as jobs and cronjobs create pods that terminate after they complete a task (e.g., report generation or backup). On the other hand, objects like deployments, replica sets, daemon sets, and stateful sets generate pods that run until the user interrupts them manually.

The state of a pod at any given stage in its lifecycle is called the pod phase. There are five possible pod phases:

- Pending. When a pod shows the pending status, it means that Kubernetes accepted it and that the containers that make it up are currently being prepared for running.

- Running. The running status signifies that Kubernetes assigned the pod to a node and completed the container setup. At least one container must be starting, restarting, or running for the status to be displayed.

- Succeeded. Once a pod completes a task (e.g., carrying out a job-related operation), it terminates with the Succeeded status. This means that all its containers stopped successfully and will not restart.

- Failed. The failed status informs the user that all the containers in the pod have terminated and that at least one of them terminated with a non-zero status (i.e., with an error).

- Unknown. The unknown pod status usually indicates a problem with the connection to the node on which the pod is running.

Aside from the phases, pods also have conditions. The possible condition types include PodScheduled, Initialized, ContainersReady, and Ready. Each condition has one of three possible statuses: True, False, or Unknown.
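The phase and the conditions are both stored in the status section of the pod object. The fragment below is a hand-written illustration of how that section may look for a healthy pod. It is not output captured from a real cluster, and the exact set of condition types depends on the Kubernetes version.

```yaml
# Illustrative excerpt of a pod object's status section (not real output).
status:
  phase: Running
  conditions:
    - type: PodScheduled        # the pod has been assigned to a node
      status: "True"
    - type: Initialized         # all init containers completed
      status: "True"
    - type: ContainersReady     # all containers passed their readiness checks
      status: "True"
    - type: Ready               # the pod can serve requests
      status: "True"
```

To inspect the actual status of a pod, run kubectl get pod [pod-name] -o yaml and look for the status section at the end of the output.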

Logs

Kubernetes collects logs from the containers running within a pod. While each container runtime has a custom way of handling and redirecting log output, integration with Kubernetes follows the standardized CRI logging format.

Users can configure Kubernetes to rotate container logs and manage the logging directory automatically. The logs can be retrieved using a dedicated Kubernetes API feature, accessible via the kubectl logs command.

Controllers

Controllers are Kubernetes objects that create pods, monitor their health and number, and perform management actions. This includes restarting and terminating pods, creating new pod replicas, etc.

The daemon called Controller Manager is in charge of managing controllers. It uses control loops to monitor the cluster state and communicates with the API server to make the necessary changes.

The following is the list of the six most important Kubernetes controllers:

- ReplicaSet. Creates a set of pods to run the same workload.

- Deployment. Creates a configured ReplicaSet and provides additional update and rollback configurations.

- DaemonSet. Ensures that all nodes, or a selected subset of nodes, run a copy of a pod.

- StatefulSet. Manages stateful applications and creates persistent storage and pods whose names persist across restarts.

- Job. Creates pods that successfully terminate after they finish a task.

- CronJob. A CronJob helps schedule Jobs.
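As a sketch of how the last two controllers in the list fit together, the manifest below defines a CronJob that creates a Job on a schedule, and the Job in turn creates a pod that terminates when its task is done. The name, schedule, image, and command are placeholders invented for this example.

```yaml
# Hypothetical CronJob: every night at 02:00 it creates a Job,
# and the Job creates a pod that runs once and exits.
apiVersion: batch/v1
kind: CronJob
metadata:
  name: nightly-report          # illustrative name
spec:
  schedule: "0 2 * * *"         # standard cron syntax
  jobTemplate:                  # template for the Job objects
    spec:
      template:                 # template for the pods each Job creates
        spec:
          restartPolicy: OnFailure
          containers:
            - name: report
              image: busybox:1.36
              command: ["sh", "-c", "echo generating report && sleep 5"]
```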

Templates

In their YAML configurations, Kubernetes controllers feature specifications called pod templates. Templates describe which containers and volumes a pod should run. Controllers use templates whenever they need to create new pods.

Users update pod configuration by changing the parameters specified in the pod template (the .spec.template field) of a controller. New pods that the controller creates afterward use the updated template.
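The following sketch marks where the pod template sits inside a controller manifest, using a DaemonSet as the example. The object name, label, image, and command are assumptions made for illustration.

```yaml
# Hypothetical DaemonSet; everything under spec.template is the pod template.
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: node-agent              # illustrative name
spec:
  selector:
    matchLabels:
      app: node-agent
  template:                     # pod template starts here
    metadata:
      labels:
        app: node-agent         # must match the selector above
    spec:
      containers:
        - name: agent
          image: busybox:1.36
          command: ["sh", "-c", "while true; do date; sleep 60; done"]
```

The template has the same structure as a standalone pod manifest, except that it is nested in the controller and has no apiVersion or kind fields of its own.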

Networking

Each pod in a Kubernetes cluster receives a unique IP address. The containers within that pod share this address, alongside the network namespace and ports. This setup enables them to communicate using localhost.

If a container from one pod wants to communicate with a container from another pod in the cluster, it needs to use IP networking. Pods feature a virtual ethernet connection that connects to the virtual ethernet device on the node and creates a tunnel for the pod network inside the node.

Note: Read our Kubernetes Networking Guide to understand how communication between various Kubernetes components works.

Storage

Pod data is stored in volumes, storage directories accessible by all the containers within the pod. There are two main types of storage volumes:

- Persistent volumes persist across pod failures. The volumes' lifecycle is managed by the PersistentVolume subsystem, and it is independent of the lifecycle of the related pods.

- Ephemeral volumes are destroyed alongside the pod that used them.

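The user specifies the volumes that the pod should use in the volumes section of the pod's YAML declaration. Persistent volumes and the claims that request them are defined in separate YAML files.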
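Here is a minimal sketch that combines both volume types: a claim for persistent storage and a pod that mounts it next to an ephemeral volume. It assumes the cluster has a default storage class that can satisfy the claim. All names, sizes, and paths are illustrative.

```yaml
# Hypothetical claim for 1Gi of persistent storage.
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: data-claim              # illustrative name
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 1Gi
---
# Hypothetical pod that uses the claim and an ephemeral scratch volume.
apiVersion: v1
kind: Pod
metadata:
  name: storage-demo
spec:
  volumes:
    - name: data
      persistentVolumeClaim:
        claimName: data-claim   # survives pod deletion
    - name: scratch
      emptyDir: {}              # removed together with the pod
  containers:
    - name: app
      image: busybox:1.36
      command: ["sh", "-c", "date >> /data/started.log && sleep 3600"]
      volumeMounts:
        - name: data
          mountPath: /data
        - name: scratch
          mountPath: /scratch
```

If the pod is deleted and recreated, the file under /data should still contain the earlier entries, while anything written to /scratch is lost.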

Note: Read more about persistent volumes in our introduction to Kubernetes Persistent Volumes.

Working with Kubernetes Pods

Users interact with pods using kubectl, a command-line tool that controls Kubernetes clusters by sending HTTP requests to the Kubernetes API.

The following sections list some of the most common pod management operations.

Pod OS

Users can set the operating system a pod should run on. Currently, Linux and Windows are the only two supported operating systems.

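Specify the operating system (linux or windows) for your pods in the .spec.os.name field of the YAML declaration. Kubernetes will not run pods on the nodes that do not satisfy the Pod OS criterion. Since the scheduler does not use this field when it picks a node, clusters with mixed operating systems should also use a node selector based on the kubernetes.io/os node label.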
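A minimal sketch of a pod declaration that sets the operating system might look like the following. The pod name and image are assumptions, and the node selector is included on the assumption that the cluster contains both Linux and Windows nodes.

```yaml
# Hypothetical pod restricted to Linux nodes.
apiVersion: v1
kind: Pod
metadata:
  name: linux-only              # illustrative name
spec:
  os:
    name: linux                 # the OS the pod's containers are built for
  nodeSelector:
    kubernetes.io/os: linux     # well-known node label, steers scheduling
  containers:
    - name: nginx
      image: nginx:1.25
```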

Create Pods Directly

While creating pods directly from the command line is useful for testing purposes, it is not a recommended practice.

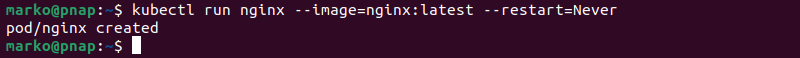

To manually create a pod, use the kubectl run command:

kubectl run [pod-name] --image=[container-image] --restart=Never

The --restart=Never option prevents the pod from continuously trying to restart, which would cause a crash loop. The accompanying example shows an Nginx pod created with kubectl run.
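For comparison, the manifest below is roughly what the command produces when the pod name and the image are both nginx. Treat it as an approximation: the object stored in the cluster contains additional default fields.

```yaml
# Approximate declarative equivalent of:
#   kubectl run nginx --image=nginx --restart=Never
apiVersion: v1
kind: Pod
metadata:
  name: nginx
  labels:
    run: nginx                  # label that kubectl run adds to the pod
spec:
  restartPolicy: Never          # set by --restart=Never
  containers:
    - name: nginx
      image: nginx
```

Keeping the declaration in a file makes the pod reproducible and easier to review than a one-off command.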

Deploy Pods

The recommended way of creating pods is through workload resources (deployments, replica sets, etc.). For example, the following YAML file creates an Nginx deployment with five pod replicas. Each pod has a single container running the latest nginx image.

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nginx
    spec:
      selector:
        matchLabels:
          app: nginx
      replicas: 5
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
          - name: nginx
            image: nginx:latest
            ports:
            - containerPort: 80

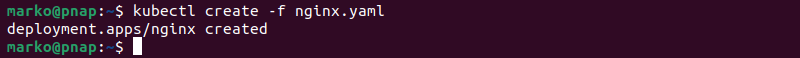

To create pods from the YAML file, use the kubectl create command:

kubectl create -f [yaml-file]

Update or Replace Pods

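Most pod metadata, such as the name, namespace, and UID, is immutable after Kubernetes creates a pod, and only a few fields of the pod specification (for example, the container image) can be changed in place. To make other changes, you need to modify the pod template and create new pods with the desired characteristics.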

List Pods

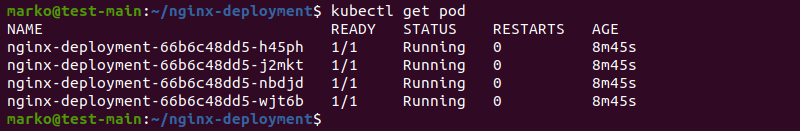

See the available pods by using the following command:

kubectl get pod

The output shows the list of pods in the current namespace, alongside their status, age, and other info.

Restart Pods

There is no direct way to restart a pod in Kubernetes using kubectl. However, there are three workarounds available:

- Rolling restart is the fastest available method. Kubernetes performs a step-by-step shutdown and replacement of each pod in a deployment.

- Changing an environment variable forces pods to restart and synchronize with the changes.

- Scaling the replicas to zero, then back to the desired number.
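As a sketch of the environment variable method, the patch file below adds a variable to the pod template of a deployment. It assumes a deployment named nginx with a container named nginx. The file name restart-patch.yaml and the variable RESTART_TRIGGER are hypothetical and have no special meaning to Kubernetes.

```yaml
# restart-patch.yaml (hypothetical file name)
# Changing the pod template causes the deployment to roll out new pods.
spec:
  template:
    spec:
      containers:
        - name: nginx                     # must match the existing container name
          env:
            - name: RESTART_TRIGGER       # hypothetical variable name
              value: "2024-01-01T00:00:00Z"   # change the value to trigger a new rollout
```

Apply the patch with kubectl patch deployment nginx --patch-file restart-patch.yaml. For the rolling restart method, the corresponding command is kubectl rollout restart deployment nginx.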

Note: For more information about the three pod restart methods mentioned above, refer to How to Restart Kubernetes Pods.

Delete Pods

Kubernetes automatically deletes pods after they complete their lifecycle. By default, each pod that gets deleted receives 30 seconds to terminate gracefully.
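The length of the grace period can be set per pod. The following sketch assumes an application that needs up to a minute to shut down cleanly. The pod name, the 60-second value, and the preStop command are illustrative.

```yaml
# Hypothetical pod with a longer termination grace period.
apiVersion: v1
kind: Pod
metadata:
  name: slow-shutdown           # illustrative name
spec:
  terminationGracePeriodSeconds: 60   # default is 30
  containers:
    - name: app
      image: nginx:1.25
      lifecycle:
        preStop:                # runs before the container receives the stop signal
          exec:
            command: ["sh", "-c", "sleep 5"]
```

The time spent in the preStop hook counts toward the grace period, so the value should cover both the hook and the application's own shutdown.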

You can also delete a pod via the command line by passing the YAML file containing pod specifications to kubectl delete:

kubectl delete -f [yaml-file]

This command honors the grace period for pod termination. To override the grace period and immediately remove the pod from the cluster, add the --grace-period=0 and --force options.

View Pod Logs

The kubectl logs command allows the user to see the logs for a specific pod.

kubectl logs [pod-name]

Assign Pods to Nodes

Kubernetes automatically decides which nodes will host which pods based on the specification provided on creating the workload resource. However, there are two ways a user can influence the choice of a node:

- Using the nodeSelector field in the YAML file allows you to select specific nodes.

- Creating a DaemonSet resource provides a way to overcome the scheduling limitations and ensure a specific app gets deployed on all the cluster nodes.
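A minimal sketch of the first option is shown below. It assumes that at least one node carries the label disktype=ssd. Both the label and the pod name are made up for this example.

```yaml
# Hypothetical pod that can only be scheduled on nodes labeled disktype=ssd.
apiVersion: v1
kind: Pod
metadata:
  name: nginx-on-ssd            # illustrative name
spec:
  nodeSelector:
    disktype: ssd               # assumed node label
  containers:
    - name: nginx
      image: nginx:1.25
```

A node can be given the assumed label with kubectl label nodes [node-name] disktype=ssd. If no node matches the selector, the pod stays in the Pending phase.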

Monitor Pods

Collecting data from individual pods is useful for getting a clear picture of the cluster's health. The essential pod data to monitor include:

- Total pod instances. This parameter helps ensure high availability.

- The actual number of pod instances vs. the expected number of pod instances. Helps in creating resource redistribution tactics.

- Pod deployment health. Identifies misconfigurations and problems with the distribution of pods to nodes.

Note: Learn more about cluster monitoring in Kubernetes Monitoring Best Practices.

Conclusion

This article provided a comprehensive overview of Kubernetes pods for beginner users of this popular orchestration platform. After reading the article, you should know what pods are, how they work, and how they are managed.